Transform Your Supply Chain Planning and Marketing Strategies with Google Cloud and SAP Integration


May 11, 2023 | Manju Devadas


| ERPs | Advantages with BigQuery |
| --- | --- |
| Inefficient Real-Time Analytics: Limited capability for real-time data processing and insights. | Enhanced Customer Understanding: BigQuery’s advanced analytics provide deeper insights into customer behavior. |
| Complex Data Integration: Difficulty consolidating data from multiple SAP modules and external sources. | Rapid Response to Market Changes: Real-time data analysis empowers businesses to react swiftly to market trends. |
| Slower Decision-Making: Extended processing times for large data sets delay critical business decisions. | Superior Predictive Capabilities: Leverage BigQuery’s machine learning for accurate predictions and forecasting. |
| | Accelerated Decision-Making: Faster query responses enable timely, data-driven business decisions. |
| | Flexible, Customized Analytics: Build tailored analytics solutions to address unique business challenges. |

As an SAP user like yourself, I have navigated the world of ECC and HANA, reaping their benefits and grappling with their limitations. Over the years, I have come to understand that while they offer valuable support for many aspects of our businesses, these systems often struggle to meet the growing demands of today’s data-driven landscape.

Over my three-decade-long career as a data analytics professional, I’ve walked the halls of countless organizations, working closely with teams that grapple with the complexities of SAP systems.

I have witnessed the frustration and setbacks that arise when trying to extract valuable insights from the data locked within these systems.

As someone who is deeply passionate about helping businesses make sense of their data, I can’t help but feel a personal connection to the struggles faced by my fellow professionals.

The journey has not been without its challenges. SAP systems, like ECC and HANA, are powerful tools, but their complexity often slows us down and prevents us from fully harnessing the potential of our data.

I’ve spent countless hours working alongside teams, attempting to find workarounds and solutions to overcome these limitations, only to see the same problems resurface time and again.

This experience has led me on a quest for a more advanced and agile solution that would not only streamline our data analytics processes but also revolutionize the way we work with data. When I first encountered Google Cloud’s BigQuery, I knew I had found the answer to many of the challenges we face with SAP systems.

The power and flexibility of BigQuery have been a game-changer for me and the organizations I’ve had the pleasure of working with. As we embrace this platform, the teams I work with are finally breaking free from the constraints of traditional SAP systems.

This article is my humble attempt to share my personal journey and experiences with SAP data transformation. I hope that, through these stories and insights, you too will be inspired to explore the possibilities that BigQuery and other advanced data analytics solutions can offer, empowering your organization to excel in the ever-changing, data-driven world.

From the very first query, when one person asked another, “How many fruits do you have?”, to the complex queries of businesses that generate huge amounts of data, the need for querying tools has been evident throughout human history.

As businesses grew and technology advanced, querying tools evolved to meet the demand for more complex data analysis. Early tools were simple and often required manual entry of data, making them prone to errors. But as businesses generated more data, the tools had to become more sophisticated.

In 1970, IBM researcher Edgar F. Codd proposed the relational model, which organizes data in tables with defined relationships. This revolutionized data management, but querying tools were still limited. Later in the 1970s, IBM researchers developed the Structured Query Language (SQL), which enabled more complex queries to be performed on relational databases.

Over the years, various types of databases and querying tools emerged, each with its strengths and weaknesses. Some specialized in real-time data analysis, while others were better suited for historical analysis.

But as data volumes exploded, traditional querying tools struggled to keep up. Queries that once took seconds or minutes to run were now taking hours or even days. This was due to the limitations of traditional disk-based storage systems, which were slow and inefficient at handling large volumes of data.

Enter Google Cloud’s BigQuery. Unlike traditional querying tools, BigQuery was designed from the ground up to handle massive amounts of data at lightning speeds. It does this by leveraging Google’s expertise in data center design and using a columnar storage format that is optimized for analytical queries.

With BigQuery, businesses can perform complex queries on petabytes of data in seconds, allowing them to gain valuable insights and make data-driven decisions faster than ever before. Some of the world’s largest companies, including Spotify, Nielsen, and The New York Times, use BigQuery to power their data analysis.

One interesting example of BigQuery’s impact is the online gaming company Zynga. With millions of daily players, Zynga generates massive amounts of data on player behavior. By using BigQuery to analyze this data, Zynga was able to identify patterns in player behavior and make targeted changes to its games, resulting in increased engagement and revenue.

The story of BigQuery is a tale of the evolution of querying tools from the first query ever done to the modern-day need for lightning-fast data analysis. With its ability to handle massive amounts of data at lightning speeds, BigQuery is helping companies make data-driven decisions faster and more efficiently than ever before.

Traditional SAP systems can struggle with large amounts of data, particularly when complex queries and reporting come into play. You may have experienced slow response times and limitations in analyzing large datasets yourself.

BigQuery’s serverless architecture tackles this issue head-on by allowing us to scale our data analytics efforts without worrying about infrastructure constraints.

This has resulted in significantly faster query performance, giving us the ability to analyze massive datasets in near real time and generate insights at a pace that keeps up with our business needs.

One of the key benefits of using BigQuery for SAP users is the ability to consolidate data from various SAP modules in one place. For example, a manufacturing company might combine data from several SAP modules in BigQuery to optimize its production schedules.

This approach of consolidating data from various SAP modules in BigQuery not only improves the efficiency of the data analysis process but also allows organizations to uncover new insights by connecting previously siloed data sources.

One of the challenges I’ve faced with SAP is the delay in getting up-to-date information out of my data.

BigQuery can ingest and process streaming data as it arrives. This translates into near-instant access to insights as data is generated, allowing us to make more informed decisions and respond rapidly to evolving business conditions.

For SAP users, this means a dramatic improvement in agility and responsiveness, which can be crucial in today’s fast-paced business environment.

BigQuery can process vast datasets in parallel, eliminating the bottlenecks that often hinder traditional SAP systems. Complex queries can be executed quickly and efficiently, enabling users to explore their data with ease and discover previously hidden patterns and trends.

When I think about supply chain management, I recall the times when we could only measure lead times and attempt to optimize them. But now, with BigQuery, we can go much further. We can analyze the intricate relationships between lead times, supplier performance, product quality, and even external influences such as geopolitical risks or weather data.

This level of granularity simply wasn’t possible before, and it’s opened up a whole new realm of insights and optimization opportunities.

Discover how Pluto7’s data platform solutions can help you harness the power of BigQuery to optimize your supply chain management and unlock valuable insights.

In the realm of marketing and sales, I’ve noticed a similar transformation. In the past, we could measure the success of marketing campaigns by tracking conversions, but we could not understand the nuances of customer behavior and preferences. BigQuery has changed all that by enabling us to blend CRM data with customer behavior data from sources like Google Analytics.

L-Nutra had a simple goal: they wanted to understand their customers well enough to offer personalized product recommendations. With most of their customer data in fragmented systems, they had no idea where to start.

We worked with them to consolidate their data and create a data foundation. With everything centralized, they could now get a unified view of their customers.

And that opened multiple doors. For example, they could now run a wide variety of queries in just seconds. They could analyze the relationship between customer demographics and purchasing habits or identify seasonal trends in customer behavior.

Today, with L-Nutra’s machine learning-driven automated process, they can analyze streaming data and generate insights in real-time, pushing the boundaries of innovation at an incredible pace. By applying various data strategies, they can understand their customers better and tailor products to serve their unique needs.

Data integration has always been a critical aspect of working with SAP systems, as it often requires blending data from various sources, such as external datasets, other cloud services, and even non-SAP systems.

BigQuery’s native integration with Google Cloud services and its support for a wide range of data formats have made this process more seamless. Now, you can effortlessly import data from your SAP system into BigQuery, combine it with external datasets or other Google Cloud services, and share the resulting insights across your organization.

Insights generated from the market, typically outside business systems, have traditionally been out of reach.

However, this kind of data integration (blending of various data sources) is now possible through Google Cloud Cortex, which provides over 50 pre-defined BigQuery operational data marts for SAP out-of-the-box. These data marts cover functional areas like purchasing, sales & distribution, and master data.

Google Cloud Cortex also offers a clear approach for replicating data from SAP into BigQuery. Unlike traditional ETL approaches, the BigQuery Connector for SAP automatically suggests fields, field names, and data types for the target table based on the source data.

The BigQuery Connector for SAP also automates many tasks on its own.

For example, it automatically handles the complex, multi-step process of transforming SAP data types for use in BigQuery. This includes mapping data-type transitions between the SAP and BigQuery environments, creating a target table schema on BigQuery for the transformed data types, building the target BigQuery table, and even adapting as new data types appear in your SAP environment.
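As a rough illustration of what such a type-mapping step involves, here is a minimal Python sketch. It is not the connector’s code, and the type pairs in `SAP_TO_BIGQUERY` are assumptions chosen for illustration rather than the connector’s documented defaults. The field list and the `ZZNEW` field are hypothetical as well.

```python
# Illustrative mapping only: these type pairs are assumptions for this
# sketch, not the documented defaults of the BigQuery Connector for SAP.
SAP_TO_BIGQUERY = {
    "CHAR": "STRING",
    "NUMC": "STRING",
    "DATS": "DATE",
    "TIMS": "TIME",
    "DEC": "NUMERIC",
    "INT4": "INT64",
    "FLTP": "FLOAT64",
}


def build_target_schema(sap_fields):
    """Turn (field_name, sap_type) pairs into BigQuery-style column definitions.

    Unknown SAP types fall back to STRING so that a new data type in the
    source does not break the schema build.
    """
    schema = []
    for name, sap_type in sap_fields:
        bq_type = SAP_TO_BIGQUERY.get(sap_type.upper(), "STRING")
        schema.append({"name": name, "type": bq_type})
    return schema


# Hypothetical slice of a sales-order table; ZZNEW has an unmapped type.
fields = [("VBELN", "CHAR"), ("ERDAT", "DATS"), ("NETWR", "DEC"), ("ZZNEW", "XYZ")]
schema = build_target_schema(fields)
```

The fallback to `STRING` is one possible way to keep a pipeline running when a new data type shows up; a stricter design could raise an error instead.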

BigQuery’s built-in support for advanced analytics functions and machine learning has unlocked new possibilities for tackling complex problems and discovering hidden patterns within data.

But if complete data transformation is what you are seeking to achieve, Google Cloud’s potential goes far beyond BigQuery.

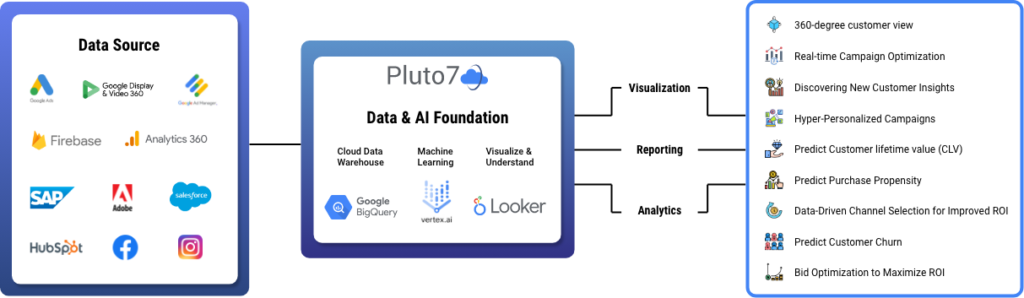

Vertex AI, for instance, is a powerful and easy-to-use suite of machine learning tools that allows us to build, deploy, and scale AI models quickly and efficiently. At Pluto7, it has enabled us to deliver unparalleled benefits to our clients by incorporating these cutting-edge technologies into our data platform, which sits on Google Cloud.

As a platform built on top of Google Cloud, our data platform is designed to deliver industry-specific solutions by leveraging Google Cloud’s vast array of resources. Some examples of the impact our platform has had on various industries using a combination of BigQuery, Vertex AI, and other industry-specific solutions include the following:

Data warehousing is often costly and requires thorough planning and careful spending. BigQuery’s pay-as-you-go pricing model and automatic discounts for long-term storage offer a cost-effective solution that addresses these concerns.

Google Cloud has also recently announced new enhancements to BigQuery, including compute capacity autoscaling, which adds fine-grained compute resources in real time to match workload demands, so you only pay for the compute capacity you use. Additionally, compressed storage pricing lets you pay for storage based on the size of your data after compression, reducing storage costs even as your data footprint grows.
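To see how pay-as-you-go query pricing plays out, the arithmetic can be sketched in a few lines of Python. The sketch assumes on-demand billing by bytes scanned; the price of 5.0 per TiB and the data volumes are placeholders, not current list prices, so check the rate for your region before relying on the numbers.

```python
TIB = 1024 ** 4  # bytes in one tebibyte


def estimate_query_cost(bytes_scanned, price_per_tib):
    """Estimate on-demand query cost from the number of bytes scanned.

    price_per_tib is a placeholder parameter; look up the current rate.
    """
    return bytes_scanned / TIB * price_per_tib


# Hypothetical numbers: a full table scan versus the same query limited
# to a single month of a date-partitioned table.
full_scan = estimate_query_cost(12 * TIB, price_per_tib=5.0)
pruned_scan = estimate_query_cost(1 * TIB, price_per_tib=5.0)
```

With these made-up inputs the full scan costs twelve times as much as the pruned one, which is why limiting the data a query touches matters as much as the unit price.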

In the face of an ever-changing business landscape, the significance of decision intelligence and decision support has grown exponentially. The modern context calls for organizations to adapt and excel in the following key aspects of decision-making:

BigQuery plays a pivotal role in addressing these challenges by organizing extensive datasets into tables that can be queried and analyzed rapidly, promoting contextual, data-driven decision-making. Its built-in query result caching and optimizations further improve query performance, so users get answers quickly.

It also offers a wide range of connectors and data transfer services that simplify integrating various data sources, including SAP systems, social media, IoT devices, and more. Its federated querying capabilities allow users to analyze data from multiple sources simultaneously, providing a holistic view of the organization’s data landscape.

Transferring data to BigQuery is as much a business question as it is a technical one. Before moving or even looking at the tools available, it’s essential to consider the expected outcomes. For example, if you’re looking to optimize demand forecasting with external datasets, you may already have a good idea of the data sources to consider (including private and public sources).

Before moving data to BigQuery, it’s crucial to ask a series of questions to ensure a smooth migration process:

Google Cloud Cortex is an open-source framework that significantly simplifies data movement for SAP and Salesforce data sources. Specifically tailored for core supply chain use cases that demand precise outcomes, Cortex serves as a valuable tool for supply chain transformation.

It includes new data marts, semantic views, and templates of Looker dashboards to support operational analytics and relevant metrics for inventory management. These are designed to help overcome challenges and provide a starting point for customers to adapt further to their specific business needs.

Google Cloud Cortex, with its robust feature set, empowers businesses to dive deep into their data and extract meaningful insights. For example, it enables organizations to:

When deciding between an ETL (Extract, Transform, Load) or ELT (Extract, Load, Transform) approach, it’s important to consider your organization’s specific needs and requirements.

For instance, if you have an SAP system with a large volume of data that requires significant transformation before analysis, ETL may be the better option. It would involve extracting data from SAP, transforming it into the desired format, and then loading it into BigQuery for analysis.

On the other hand, if your SAP data is already well-structured and requires minimal transformation, you might opt for an ELT approach. This would involve extracting data from SAP, loading it into BigQuery, and then performing any necessary transformations within BigQuery itself.

For example, if you have data from SAP’s Sales and Distribution (SD) module that needs to be combined with data from the Finance (FI) module, you could load both datasets into BigQuery and perform the transformation there.
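The ELT pattern described here can be sketched as follows. To keep the example runnable anywhere, Python’s built-in `sqlite3` stands in for the warehouse; in practice the same load-then-transform steps would run as BigQuery SQL. The table and column names are simplified, hypothetical stand-ins for SD billing and FI accounting data, and the rows are made up.

```python
import sqlite3

# sqlite3 is only a local stand-in for the warehouse in this sketch.
conn = sqlite3.connect(":memory:")

# "Load" step: land the raw extracts unchanged.
conn.execute("CREATE TABLE sd_billing (billing_doc TEXT, customer TEXT, net_value REAL)")
conn.execute("CREATE TABLE fi_documents (accounting_doc TEXT, billing_doc TEXT, cleared INTEGER)")
conn.executemany(
    "INSERT INTO sd_billing VALUES (?, ?, ?)",
    [("9001", "C100", 1200.0), ("9002", "C100", 800.0), ("9003", "C200", 500.0)],
)
conn.executemany(
    "INSERT INTO fi_documents VALUES (?, ?, ?)",
    [("A1", "9001", 1), ("A2", "9002", 0), ("A3", "9003", 1)],
)

# "Transform" step: join the two modules inside the warehouse to get
# billed value and still-open value per customer.
rows = conn.execute(
    """
    SELECT b.customer,
           SUM(b.net_value) AS billed,
           SUM(CASE WHEN f.cleared = 0 THEN b.net_value ELSE 0.0 END) AS open_value
    FROM sd_billing AS b
    JOIN fi_documents AS f ON f.billing_doc = b.billing_doc
    GROUP BY b.customer
    ORDER BY b.customer
    """
).fetchall()
```

Because the raw tables are loaded first, the join logic can be changed later without re-extracting anything from SAP, which is the main practical argument for ELT in this scenario.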

Data replication options include replicating on a table-by-table basis and replicating pre-built logic.

Table-by-table replication involves moving each table from the SAP system to BigQuery individually. This approach is suitable for organizations that require granular control over the migration process and want to move only specific tables.

Replicating pre-built logic involves moving a set of predefined transformations and calculations from the SAP system to BigQuery. This approach is ideal for organizations that have complex business logic built into their SAP system and want to maintain consistency during the migration.

It’s all about understanding your requirements and determining which option will yield the most value for your business.

Let me give you an example to illustrate when each of these methods comes in handy.

Imagine you’re running a company with a strong focus on analyzing customer behavior and improving customer satisfaction. Your primary concern is migrating a select set of tables from your SAP system, like those containing customer data, order history, and feedback. In this scenario, table-by-table replication works best, as it allows you to selectively move the most relevant tables to BigQuery, keeping the process focused on your core objective.

On the other hand, let’s say you’re in charge of a large organization with intricate business processes built into your SAP system. You’ve got multiple departments, each with its own set of predefined calculations and transformations that need to be preserved during the migration. In this case, replicating pre-built logic is the way to go. This approach ensures that your existing business logic remains intact in BigQuery, maintaining consistency across your organization and minimizing disruptions to your workflows.

At the end of the day, it’s all about aligning the migration approach with your organization’s goals and priorities. Our team at Pluto7 is dedicated to working closely with you, understanding your needs, and guiding you towards the most suitable replication method for your SAP data migration to BigQuery.

BigQuery’s recently launched native support for Change Data Capture (CDC) is a powerful feature that allows you to process and apply updates and deletions to your BigQuery tables in real time. It uses the BigQuery Storage Write API to achieve this, making it possible to replicate data from transactional systems into BigQuery seamlessly.

As a result, you can easily analyze data from both transactional and analytical systems, enabling better cross-functional insights and decision-making.

With CDC, you can efficiently process data changes without significantly increasing data ingestion time. This means your dashboards and reports are updated with near real-time data, empowering you to make data-driven decisions swiftly.

For instance, one of our retail clients found BigQuery’s CDC capabilities to be incredibly valuable for their inventory management. Like any other retail brand, they need to keep a close eye on stock levels across multiple store locations to make timely replenishment decisions and avoid stockouts.

By leveraging BigQuery CDC, they can effectively replicate their transactional data from their POS (point-of-sale) systems into BigQuery, enabling seamless analysis alongside their other analytical data sources.
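Conceptually, CDC means replaying an ordered stream of upserts and deletes against a table keyed by primary key. The following Python sketch shows that idea with plain dictionaries. The record layout, the `apply_changes` helper, and the stock figures are all assumptions for illustration; it does not call the Storage Write API.

```python
def apply_changes(table, changes):
    """Apply an ordered stream of change records to a table keyed by primary key.

    Each record carries a change type of "UPSERT" or "DELETE"; the record
    layout is a simplified assumption for this sketch.
    """
    for change in changes:
        key = change["key"]
        if change["change_type"] == "UPSERT":
            table[key] = change["row"]  # insert a new row or overwrite the old one
        elif change["change_type"] == "DELETE":
            table.pop(key, None)  # ignore deletes for keys that are not present
        else:
            raise ValueError(f"unknown change type: {change['change_type']}")
    return table


# Hypothetical stock levels keyed by (store, sku).
stock = {("S1", "coat"): {"on_hand": 10}}
changes = [
    {"key": ("S1", "coat"), "change_type": "UPSERT", "row": {"on_hand": 7}},
    {"key": ("S2", "coat"), "change_type": "UPSERT", "row": {"on_hand": 4}},
    {"key": ("S1", "coat"), "change_type": "DELETE"},
]
apply_changes(stock, changes)
```

Order matters: the same three records applied in a different sequence would leave a different table, which is why change streams are processed in the order they were produced.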

To truly harness the power of BigQuery, optimization is key. Techniques like partitioning, clustering, and materialized views can help accelerate query performance, while splitting complex queries into smaller ones can ensure efficient processing. Optimizing join patterns is another way to enhance BigQuery’s performance.
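As a starting point, partitioning, clustering, and a materialized view can all be expressed in BigQuery DDL. The Python sketch below only builds the statements as strings so they can be reviewed or passed to whichever client you use. The dataset, table, and column names are hypothetical, and `partitioned_table_ddl` is a helper written for this example.

```python
def partitioned_table_ddl(table, columns, partition_col, cluster_cols):
    """Build a CREATE TABLE statement with partitioning and clustering."""
    column_sql = ",\n  ".join(f"{name} {dtype}" for name, dtype in columns)
    return (
        f"CREATE TABLE `{table}` (\n  {column_sql}\n)\n"
        f"PARTITION BY {partition_col}\n"
        f"CLUSTER BY {', '.join(cluster_cols)}"
    )


# Hypothetical dataset, table, and column names.
ddl = partitioned_table_ddl(
    "analytics.sales_orders",
    [("order_id", "STRING"), ("customer_id", "STRING"),
     ("order_date", "DATE"), ("net_value", "NUMERIC")],
    partition_col="order_date",
    cluster_cols=["customer_id"],
)

# A materialized view that precomputes a daily total over the same table.
daily_totals_mv = (
    "CREATE MATERIALIZED VIEW `analytics.daily_sales` AS\n"
    "SELECT order_date, SUM(net_value) AS total_value\n"
    "FROM `analytics.sales_orders`\n"
    "GROUP BY order_date"
)

print(ddl)
```

Partitioning by the date column lets queries that filter on a date range skip the other partitions, while clustering by customer keeps related rows close together within each partition.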

At Pluto7, we’ve had the privilege of working with numerous customers across diverse industries and supply chain complexities, all leveraging the power of BigQuery. We’ve employed various techniques, like the ones mentioned above, to help our clients extract valuable insights from their data while keeping costs under control.

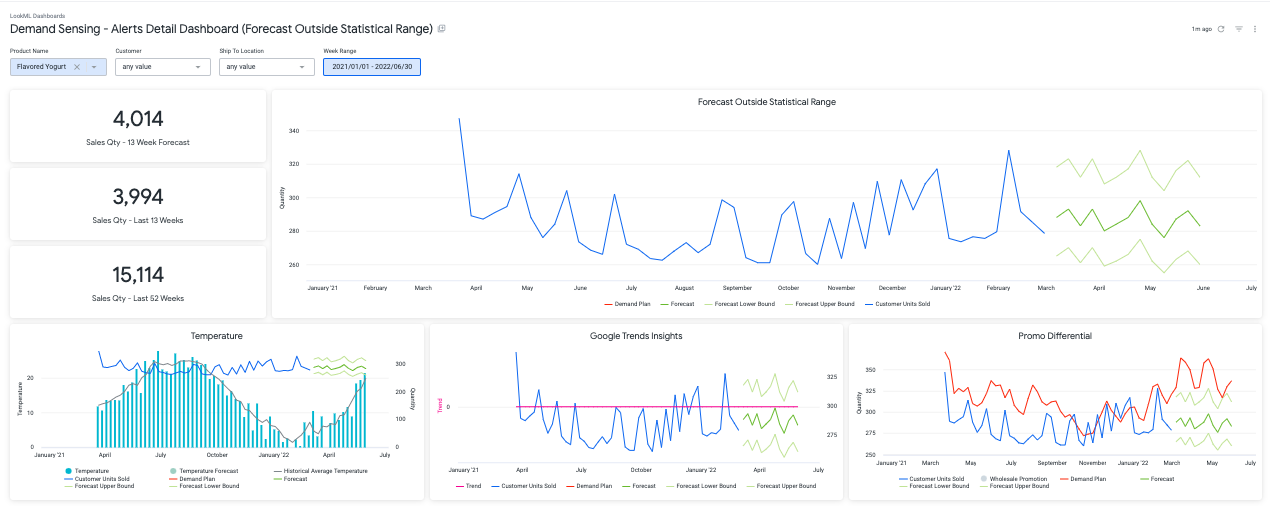

Integrating BigQuery with Looker brings the power of real-time data visualization to your organization. This combination enables you to set up interactive dashboards that are always up-to-date, helping you make informed decisions with confidence.

For example, the above dashboard provides in-depth insights into sales volumes over a given period, organized by shipping destination, product name, and customer, reflecting customer preferences and habits. It also uncovers trends in various product attributes, offering a comprehensive understanding of current market demands. The dashboard is highly interactive, allowing users to apply different filters to tailor the displayed information in the tiles according to their specific needs.

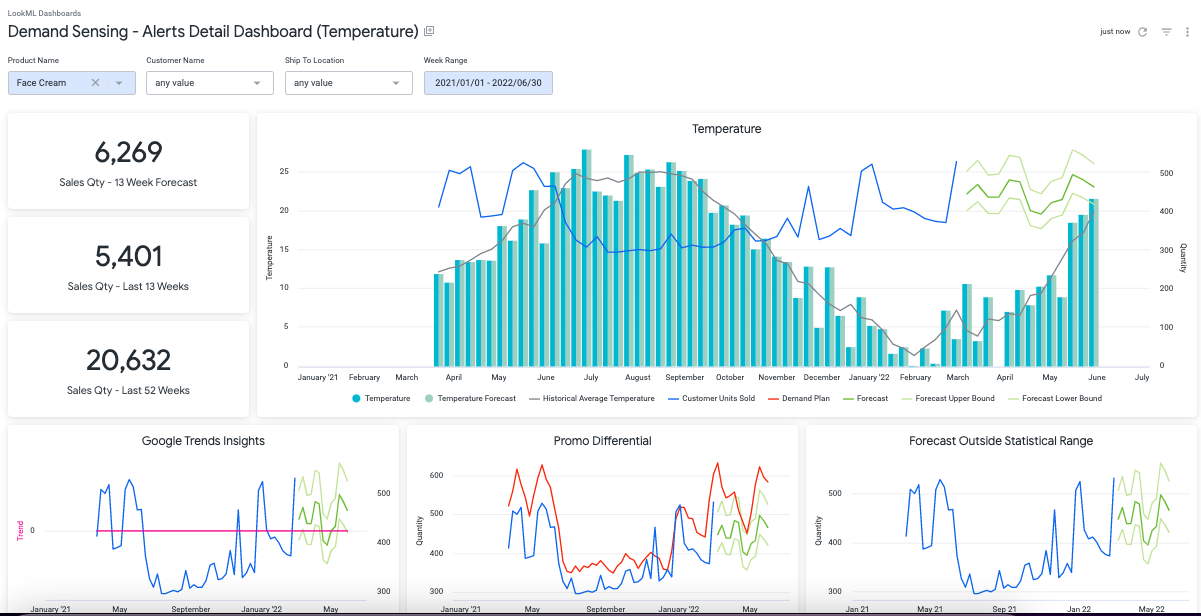

Here is another example. The above dashboard leverages Google’s weather data to offer valuable insights into sales volumes over a selected period, categorized by shipping destination, product name, and customer in relation to temperature. Users can personalize the dashboard by applying filters for location, customer, product, and date range, ensuring only pertinent information is displayed.

Our work in creating such dashboards has proven to be immensely beneficial for our clients, demonstrating our ability to deliver tailored, data-driven solutions to meet their unique needs.

But more importantly, it has helped our clients improve the conversation in their boardrooms, replacing ‘hunch-driven actions’ with ‘data-driven insights’.

BigQuery for SAP offers a wide range of in-depth use cases, covering various aspects of business operations such as supply chain, sales, and marketing. Here are some examples:

Analyzing Promotional Impact: With BigQuery, organizations can investigate the correlation between promotional activities and product demand, enabling them to determine the success of marketing campaigns and better allocate resources for future promotions.

Temperature-Influenced Demand: By integrating BigQuery with SAP data, businesses can examine the effect of temperature on product demand, allowing them to adjust their inventory and distribution strategies based on seasonal fluctuations and weather patterns.

Uncovering Emerging Trends: Utilizing BigQuery, companies can identify emerging trends in product demand and customer preferences, which can guide them in making data-driven decisions related to product development, pricing, and marketing strategies.

Tracking Lead Generation: BigQuery can help businesses monitor and analyze lead generation and conversion rates, providing insights into the efficiency of sales and marketing efforts and identifying areas for improvement.

Assessing Sales Pipeline Health: By examining sales pipeline data, organizations can gain valuable insights into the overall health of their sales funnel, pinpointing bottlenecks and opportunities for growth.

Ad Spend Optimization: By integrating Google Analytics data with BigQuery, marketers can gain insights into the effectiveness of their advertising campaigns and make data-driven decisions on optimizing ad spend for maximum ROI.

Tailoring Marketing Strategies: With the help of BigQuery, businesses can analyze customer behavior and preferences, enabling them to develop more targeted marketing campaigns and promotional offers that resonate with their audience.
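To make the temperature-influenced demand case above more concrete, here is a small Python sketch that measures how strongly sales move with temperature using the Pearson correlation coefficient. The weekly figures are made up for illustration; in practice the inputs would come from a query that joins sales data with a weather dataset.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Made-up weekly figures: average temperature (°C) and units sold.
temperature = [12, 9, 6, 3, 0, -2]
units_sold = [40, 55, 70, 95, 120, 130]

r = pearson(temperature, units_sold)
```

A value of `r` close to -1 would indicate that demand rises as temperature falls. Correlation alone does not prove that weather drives the sales, so a result like this is a prompt for further analysis rather than a forecast.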

The ‘new normal’ has become a part of our vocabulary, and the unpredictability it describes is here to stay. To deal with it, companies must rely on data-driven insights that take the guesswork out of the equation.

BigQuery is an essential tool that helps businesses survive and thrive in the modern era. It offers unparalleled opportunities to gain actionable insights across supply chain planning, factory planning, marketing, and sales.

In the following section, we will explore various examples demonstrating the power of BigQuery in driving data-driven decision-making.

The Problem:

A clothing manufacturer needs to predict the demand for their winter collection to make informed decisions regarding production levels, inventory management, and distribution strategies. Inaccurate forecasting can have significant consequences, such as overstocked inventory, wasted resources, and potential loss of sales if they cannot meet customer demand.

This challenge is further complicated by the need to consider various factors affecting demand, including customer preferences, seasonal trends, and external factors like weather patterns and economic indicators – forces beyond their control.

The company has to not only analyze historical sales data to identify patterns in customer behavior but also anticipate how external factors might impact demand in the upcoming season.

The Solution: Leverage external datasets for unprecedented market intelligence

To make this information more actionable, the company can create a real-time dashboard that connects internal and external data, providing a clear picture of their inventory status and opportunities for optimization.

For instance, if the dashboard indicates that search trends for a specific winter coat are increasing in a particular region, the manufacturing company can quickly adjust its inventory allocation to ensure adequate stock levels.

The Problem:

A retail chain struggles to maintain optimal inventory levels across its stores, leading to overstocked or understocked situations, which results in lost sales, higher carrying costs, and reduced customer satisfaction. They need real-time visibility into their inventory across multiple locations to make informed decisions on stock allocation, distribution, and replenishment.

The Solution: Set up an inventory control tower

The Problem:

A manufacturing company faces increasing production costs due to excessive waste and inefficient resource utilization. They discover that 10% of their manufactured products are being wasted due to damage during manufacturing. The company needs a data-driven approach to identify the source of the problem, implement a process to ensure quality, and ultimately reduce waste and cut costs.

The Solution: Set up a digital twin

The Problem:

A consumer goods company struggles to measure the return on investment (ROI) of its marketing efforts. They have multiple marketing channels, including online advertising, social media campaigns, and in-store promotions. They need a data-driven approach to consolidate, analyze, and assess the effectiveness of these various channels, allowing them to optimize their marketing strategies and maximize ROI.

The Solution: Set up a Marketing Data Warehouse on BigQuery

Based on the insights gained from BigQuery and the marketing data warehouse, the company can make data-driven decisions to optimize its marketing strategies, allocating resources to high-performing channels, improving targeting and messaging, and maximizing overall ROI.
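Once spend and attributed revenue sit side by side in the warehouse, the ROI comparison itself is simple arithmetic. The Python sketch below uses made-up quarterly figures and hypothetical channel names; how revenue is attributed to each channel is assumed to have been decided upstream.

```python
def channel_roi(spend, revenue):
    """Return ROI per channel as (revenue - spend) / spend."""
    return {
        channel: (revenue[channel] - cost) / cost
        for channel, cost in spend.items()
        if cost > 0  # skip channels with no spend to avoid dividing by zero
    }


# Made-up quarterly figures per channel.
spend = {"online_ads": 50_000, "social": 20_000, "in_store": 30_000}
revenue = {"online_ads": 90_000, "social": 50_000, "in_store": 33_000}

roi = channel_roi(spend, revenue)
ranked = sorted(roi, key=roi.get, reverse=True)  # best-performing channel first
```

Ranking channels this way gives a first view of where budget could be shifted; the harder part in practice is the attribution model that produces the revenue figures.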

Running SAP on Google Cloud Platform (GCP) with Pluto7 can significantly reduce your Total Cost of Ownership (TCO) due to several factors:

Pluto7’s managed services can further offload the maintenance burden, allowing you to focus on your core business.

Yes, Google Cloud is SAP enterprise-ready. Google Cloud and Pluto7, as a trusted SAP and Google Cloud partner, provide a robust, secure, and high-performance infrastructure for running your SAP applications. With Pluto7’s extensive experience in SAP consulting and GCP, you can ensure seamless integration, migration, and management of your SAP workloads on Google Cloud.

The right GCP architecture for meeting business SLAs depends on your specific needs and requirements. Pluto7, with its expertise in both SAP and GCP, will work with you to design a customized architecture that meets your business goals and SLAs. Key factors to consider include:

Migrating your SAP applications to GCP can be a complex process. The steps would include the following:

Integrating Google native technologies with SAP can help you unlock new capabilities and insights. Pluto7 can assist you in seamlessly integrating Google Cloud services, such as BigQuery, AI Platform, and Cloud Functions, with your SAP applications. This integration enables you to leverage advanced analytics, machine learning, and automation to optimize your SAP processes and gain a competitive edge.

Pluto7, a Google Cloud Premier Partner and SAP PartnerEdge Silver Partner, offers end-to-end managed services for your SAP workloads on GCP, from migration planning and execution to ongoing operations, maintenance, and optimization.

GCP provides a robust and secure foundation for your SAP applications, with built-in security features and compliance certifications. Pluto7 can help you ensure security, risk, and compliance on GCP by:

Pluto7 offers a comprehensive suite of services to support your SAP and BigQuery integration journey. The offerings cover the entire data transformation process, from migration to leveraging the power of Google Cloud Cortex. Pluto7’s specialized services include:

Pluto7 brings a wealth of experience, expertise, and certifications to ensure the success of your SAP and BigQuery integration. Here’s why you should choose Pluto7:

By choosing Pluto7 for your SAP and BigQuery integration, you can trust that you’re partnering with industry experts who will guide you through the entire data transformation journey, easing your challenges every step of the way, and ensuring a successful implementation.

Modern companies must constantly adapt and innovate to stay ahead. Adopting powerful tools like BigQuery is a critical step in this journey, enabling organizations to gain a competitive edge by using data-driven insights for revenue optimization and continuous innovation.

BigQuery, when paired with the expertise of a trusted partner like Pluto7, can drive rapid growth and help businesses outpace industry benchmarks. This is particularly evident in the retail and CPG industries, where many of our customers have harnessed BigQuery to achieve better demand forecasting, inventory management, and marketing ROI optimization.

At Pluto7, we take pride in our commitment to staying on the cutting edge of technological advancements. We maintain a strong, ongoing relationship with the Google team, which allows us to continuously upgrade our knowledge and stay ahead of the competition. Our team consists of experts with decades of experience in data transformation, giving us the expertise to tackle even the most complex data challenges.

By placing emphasis on agility, innovation, and data-driven insights, your business is poised to outperform competitors and capitalize on the opportunities that lie ahead. Embrace the transformative power of advanced data analytics and watch your organization flourish in a dynamic and rapidly evolving market landscape.